How to Iterate Over Layers in PyTorch

As a data scientist or software engineer, working with deep learning models is a common task. PyTorch is a popular deep learning framework that provides a flexible and efficient way to build and train neural networks. When working with neural networks, it is often necessary to iterate over the layers of a model. In this article, we will explore how to iterate over layers in PyTorch.

PyTorch is an open-source machine learning library based on the Torch library. It is primarily used for developing deep learning models and is designed to be efficient and flexible. PyTorch provides two main features: a Tensor library for fast numerical computation, and a deep neural network library with tools for building and training neural networks.

Why Do We Need to Iterate Over Layers in PyTorch?

In PyTorch, a neural network model is typically composed of layers. Each layer performs a specific operation on the input data and transforms it into a new representation. Iterating over the layers lets you inspect each one (its type, its input and output sizes, its weight and bias) or apply an operation to the layers one at a time. For example, printing a network with 3 inputs, 2 outputs, and an activation function shows its first component as `(0): Linear(in_features=3, out_features=2, bias=True)`.
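As a minimal sketch (assuming a recent PyTorch install), the loop below iterates over a small `nn.Sequential` model with 3 inputs, 2 outputs, and a Sigmoid activation. The layer sizes and the names `model`, `layer_names`, and `linear_weight_shapes` are illustrative assumptions, not code from the original article.

```python
import torch
import torch.nn as nn

# Illustrative model: 3 inputs -> 2 outputs, followed by a Sigmoid activation.
model = nn.Sequential(
    nn.Linear(3, 2),
    nn.Sigmoid(),
)

# nn.Sequential is iterable, so a plain for-loop visits each layer in order.
for idx, layer in enumerate(model):
    print(idx, layer)

# named_children() yields (name, module) pairs; in nn.Sequential the names are "0", "1", ...
layer_names = [name for name, _ in model.named_children()]

# While iterating, collect the weight shape of every Linear layer.
# nn.Linear stores its weight as (out_features, in_features).
linear_weight_shapes = [
    tuple(layer.weight.shape) for layer in model if isinstance(layer, nn.Linear)
]

print(layer_names)           # ['0', '1']
print(linear_weight_shapes)  # [(2, 3)]
```

The same loop works for any container module; for arbitrarily nested models, `model.modules()` or `model.named_modules()` walk the full tree instead of only the direct children.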

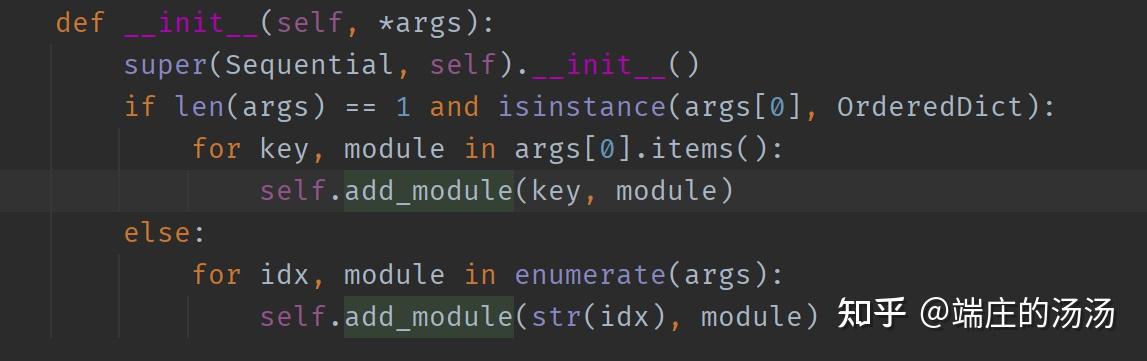

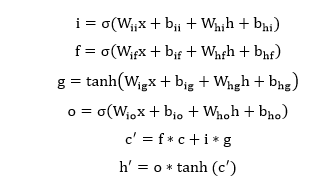

NOTE: The PyTorch version that I am using for this tutorial is as follows.

2 Inputs, 1 Output and Activation Function

To use the nn.Sequential module, you have to import torch (`import torch`).

The above illustration makes it easy to map between the PyTorch code and the network structure, but it may look a little different from what you normally see in textbooks or other documents. It can be converted into a slightly different form that is used more often in neural network documents.

You can print out the overall network structure and the weight and bias that were set automatically. The structure prints as:

`(0): Linear(in_features=2, out_features=1, bias=True)`

You can get access to each component in the sequence using an array index, as shown below.

`print('Network Structure of the first component :\n', net[0])`

`print('Weight of network :\n', net[0].weight)`

=> Network Structure of the first component : `Linear(in_features=2, out_features=1, bias=True)`

You can get access to the second component as follows.

`print('Activation function of network :\n', net[1])`

You can evaluate the whole network using the forward() function, as shown below.

`print('net.forward(x) :\n', net.forward(x))`

You can also evaluate the network manually. One reason to do this is to verify the result of the forward() function and to clarify your understanding of how the network's forward processing works. Because nn.Linear computes `x @ W.T + b`, with the weight `W` stored as an (out_features, in_features) matrix, the input is multiplied by the transposed weight:

`o = torch.mm(x, net[0].weight.t()) + net[0].bias`

`print('Sigmoid(w x + b) :\n', torch.nn.Sigmoid().forward(o))`

For practice, let's try a few more examples.

2 Inputs, 2 Outputs and Activation Function

The Sigmoid activation function is applied to each output. The network structure prints as `(0): Linear(in_features=2, out_features=2, bias=True)`, and the first component as `Linear(in_features=2, out_features=2, bias=True)`.

2 Inputs, 3 Outputs and Activation Function

The network structure prints as `(0): Linear(in_features=2, out_features=3, bias=True)`, and the first component as `Linear(in_features=2, out_features=3, bias=True)`. Its weight is printed as a tensor with `requires_grad=True`, because it is a trainable parameter.
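The walkthrough above can be collected into one runnable script. This is a minimal sketch that assumes the network is `nn.Sequential(nn.Linear(2, 1), nn.Sigmoid())` and uses a made-up two-row input `x`; the original definitions of `net` and `x` are not shown, so both are assumptions. It compares the forward pass with the manual `x @ W.T + b` computation followed by a Sigmoid.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)  # weights are initialised randomly; fix the seed for repeatability

# Assumed network: 2 inputs, 1 output, followed by a Sigmoid activation.
net = nn.Sequential(
    nn.Linear(2, 1),
    nn.Sigmoid(),
)

# Hypothetical batch of two input vectors, one sample per row, 2 features each.
x = torch.tensor([[1.0, 2.0],
                  [0.5, -1.0]])

# Forward pass through the whole network (net(x) calls forward() internally).
y_net = net(x)

# Manual evaluation: nn.Linear computes x @ W^T + b, then Sigmoid is applied.
w = net[0].weight   # shape (out_features, in_features) = (1, 2)
b = net[0].bias     # shape (out_features,) = (1,)
o = torch.mm(x, w.t()) + b
y_manual = torch.sigmoid(o)

print('net(x) :\n', y_net)
print('Sigmoid(w x + b) :\n', y_manual)
print('match:', torch.allclose(y_net, y_manual))
```

Keeping samples in rows matters once there is more than one output: computing `torch.mm(w, x.t()) + b` instead puts the outputs in rows, so the bias of shape `(out_features,)` broadcasts along the wrong axis (or raises a shape error) whenever `out_features > 1`.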

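The same check can be repeated for each network size used in the practice examples (2→1, 2→2, 2→3, and 3→2). This sketch uses a hypothetical helper `check_network` and random inputs; it only confirms that the manual computation matches the forward pass and that the output has one column per output feature.

```python
import torch
import torch.nn as nn

def check_network(in_features: int, out_features: int, batch: int = 4) -> bool:
    """Hypothetical helper: build Linear + Sigmoid and compare forward() with a manual pass."""
    net = nn.Sequential(nn.Linear(in_features, out_features), nn.Sigmoid())
    x = torch.randn(batch, in_features)  # random inputs, one sample per row

    y_net = net(x)
    # Manual evaluation: x @ W^T + b, then Sigmoid; result shape is (batch, out_features).
    o = torch.mm(x, net[0].weight.t()) + net[0].bias
    y_manual = torch.sigmoid(o)

    assert y_net.shape == (batch, out_features)
    return torch.allclose(y_net, y_manual)

sizes = [(2, 1), (2, 2), (2, 3), (3, 2)]
results = {size: check_network(*size) for size in sizes}
for (n_in, n_out), ok in results.items():
    print(f"{n_in} inputs, {n_out} outputs: forward matches manual = {ok}")
```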